Geographic information system

A geographic information system (GIS), also discussed under the names geographical information science or geospatial information studies, is a system designed to capture, store, manipulate, analyze, manage, and present all types of geographically referenced data.[1] In the simplest terms, GIS is the merging of cartography, statistical analysis, and database technology.

A GIS can be thought of as a system that digitally creates and "manipulates" spatial areas, which may be defined by the jurisdiction, purpose, or application for which a specific GIS is developed. Hence, a GIS developed for one application, jurisdiction, enterprise, or purpose is not necessarily interoperable or compatible with a GIS developed for another. A spatial data infrastructure (SDI) goes beyond a GIS: it is a concept that has no such restrictive boundaries.

Therefore, in a general sense, the term describes any information system that integrates, stores, edits, analyzes, shares, and displays geographic information to inform decision making. The term GIS-centric, however, has been specifically defined as the use of the Esri ArcGIS geodatabase as the asset/feature data repository central to a computerized maintenance management system (CMMS), as part of enterprise asset management and analytical software systems. GIS-centric certification criteria have been defined by NAGCS, the National Association of GIS-Centric Solutions (http://www.nagcs.org/index.asp). GIS applications are tools that allow users to create interactive queries (user-created searches), analyze spatial information, edit data in maps, and present the results of all these operations.[2] Geographic information science is the science underlying geographic concepts, applications, and systems.[3]

Applications

GIS technology can be used for:

- earth surface-based scientific investigations;

- resource management;

- reference and projections of a geospatial nature, both artificial and natural;

- asset management and location planning;

- archaeology;

- environmental impact-assessment;

- infrastructure assessment and development;

- urban planning and regional planning;

- cartography, for a thematic and/or time-based purpose;

- criminology;

- geospatial intelligence;

- GIS data development;

- geographic history;

- marketing (also see Geo (marketing));

- logistics;

- population and demographic studies;

- public health planning;

- prospectivity mapping;

- statistical analysis;

- environmental contamination studies;

- disease surveillance;

- military planning;

- utility and analysis applications;

- high consequence area (HCA) analysis;

- outage and trouble call management;

- damage prevention;

- engineering analysis.

Examples of use are:

- GIS may allow emergency planners to easily calculate emergency response times and the movement of response resources (for logistics) in the case of a natural disaster;

- GIS might be used to find wetlands that need protection strategies regarding pollution;

- GIS can be used by a company to site a new business location, using trends identified in GIS data to reach a previously under-served market. Most city and transportation systems planning offices have GIS sections;

- GIS can be used to track the spread of emerging infectious disease threats, which allows for informed pandemic planning and enhanced preparedness; and

- GIS can be used by utility integrity management personnel to determine high consequence areas in the event of catastrophic infrastructure or integrity failures within populated sensitive areas.

History of development

In 1854, John Snow depicted a cholera outbreak in London using points to represent the locations of some individual cases, possibly the earliest use of the geographic method.[4] His study of the distribution of cholera led to the source of the disease, a contaminated water pump (the Broad Street Pump, whose handle he had disconnected, thus terminating the outbreak) within the heart of the cholera outbreak. While the basic elements of topography and theme existed previously in cartography, the John Snow map was unique, using cartographic methods not only to depict but also to analyze clusters of geographically-dependent phenomena for the first time.

The early 20th century saw the development of photozincography, which allowed maps to be split into layers, for example one layer for vegetation and another for water. This was particularly used for printing contours – drawing these was a labour intensive task but having them on a separate layer meant they could be worked on without the other layers to confuse the draughtsman. This work was originally drawn on glass plates but later, plastic film was introduced, with the advantages of being lighter, using less storage space and being less brittle, among others. When all the layers were finished, they were combined into one image using a large process camera. Once colour printing came in, the layers idea was also used for creating separate printing plates for each colour. While the use of layers much later became one of the main typical features of a contemporary GIS, the photographic process just described is not considered to be a GIS in itself – as the maps were just images with no database to link them to.

Computer hardware development spurred by nuclear weapon research led to general-purpose computer 'mapping' applications by the early 1960s.[5]

The year 1960 saw the development of the world's first true operational GIS in Ottawa, Ontario, Canada by the federal Department of Forestry and Rural Development. Developed by Dr. Roger Tomlinson, it was called the Canada Geographic Information System (CGIS) and was used to store, analyze, and manipulate data collected for the Canada Land Inventory (CLI) – an effort to determine the land capability for rural Canada by mapping information about soils, agriculture, recreation, wildlife, waterfowl, forestry and land use at a scale of 1:50,000. A rating classification factor was also added to permit analysis.

CGIS was an improvement over 'computer mapping' applications as it provided capabilities for overlay, measurement and digitizing/scanning. It supported a national coordinate system that spanned the continent, coded lines as arcs having a true embedded topology and it stored the attribute and locational information in separate files. As a result of this, Tomlinson has become known as the 'father of GIS', particularly for his use of overlays in promoting the spatial analysis of convergent geographic data.[6]

CGIS lasted into the 1990s and built a large digital land resource database in Canada. It was developed as a mainframe-based system in support of federal and provincial resource planning and management. Its strength was continent-wide analysis of complex datasets. The CGIS was never available in a commercial form.

In 1964, Howard T. Fisher formed the Laboratory for Computer Graphics and Spatial Analysis at the Harvard Graduate School of Design (LCGSA 1965–1991), where a number of important theoretical concepts in spatial data handling were developed. By the 1970s the laboratory had distributed seminal software code and systems, such as 'SYMAP', 'GRID' and 'ODYSSEY', to universities, research centers and corporations worldwide; these served as sources for subsequent commercial development.[7]

By the early 1980s, M&S Computing (later Intergraph), along with Bentley Systems Incorporated for the CAD platform, Environmental Systems Research Institute (ESRI), CARIS (Computer Aided Resource Information System) and ERDAS (Earth Resource Data Analysis System), emerged as commercial vendors of GIS software, successfully incorporating many of the CGIS features and combining the first-generation approach of separating spatial and attribute information with a second-generation approach of organizing attribute data into database structures. In parallel, the development of two public domain systems began in the late 1970s and early 1980s.[8]

The Map Overlay and Statistical System (MOSS) project started in 1977 in Fort Collins, Colorado under the auspices of the Western Energy and Land Use Team (WELUT) and the US Fish and Wildlife Service. GRASS GIS was introduced in 1982 by the US Army Construction Engineering Research Laboratory (USA-CERL) in Champaign, Illinois, a branch of the US Army Corps of Engineers, to meet the need of the US military for software for land management and environmental planning.

In the later 1980s and 1990s, industry growth was spurred on by the growing use of GIS on Unix workstations and the personal computer. By the end of the 20th century, the rapid growth in various systems had been consolidated and standardized on relatively few platforms, and users were beginning to explore the concept of viewing GIS data over the Internet, requiring data format and transfer standards. More recently, a growing number of free, open-source GIS packages run on a range of operating systems and can be customized to perform specific tasks. Increasingly, geospatial data and mapping applications are being made available via the World Wide Web.[9]

Several authoritative books on the history of GIS have been published.[10][11]

GIS techniques and technology

Modern GIS technologies use digital information, for which various digitized data creation methods are used. The most common method of data creation is digitization, where a hard copy map or survey plan is transferred into a digital medium through the use of a computer-aided design (CAD) program, and geo-referencing capabilities. With the wide availability of ortho-rectified imagery (both from satellite and aerial sources), heads-up digitizing is becoming the main avenue through which geographic data is extracted. Heads-up digitizing involves the tracing of geographic data directly on top of the aerial imagery instead of by the traditional method of tracing the geographic form on a separate digitizing tablet (heads-down digitizing).

Relating information from different sources

GIS uses spatio-temporal (space-time) location as the key index variable for all other information. Just as a relational database containing text or numbers can relate many different tables using common key index variables, GIS can relate otherwise unrelated information by using location as the key index variable. The key is the location and/or extent in space-time.
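The idea of location as a join key can be illustrated with a minimal sketch. The two tables, their field names and the 0.01-degree grid below are all hypothetical; a production GIS would use a proper spatial index and distance predicates rather than a fixed grid, since two nearby points can fall on either side of a cell edge.

```python
import math
from collections import defaultdict

def cell_key(lon, lat, cell_size=0.01):
    """Return the (column, row) index of the grid cell containing a coordinate."""
    return (math.floor(lon / cell_size), math.floor(lat / cell_size))

def join_by_location(left, right, cell_size=0.01):
    """Pair rows from two tables whose coordinates fall in the same grid cell."""
    index = defaultdict(list)
    for row in right:
        index[cell_key(row["lon"], row["lat"], cell_size)].append(row)
    return [
        (row, match)
        for row in left
        for match in index.get(cell_key(row["lon"], row["lat"], cell_size), [])
    ]

# Two hypothetical tables that share no ID column, only coordinates.
rain_gauges = [
    {"gauge": "G1", "lon": -75.7012, "lat": 45.4215, "rain_mm": 12.5},
    {"gauge": "G2", "lon": -75.6543, "lat": 45.4032, "rain_mm": 3.0},
]
soil_samples = [
    {"sample": "S1", "lon": -75.7019, "lat": 45.4211, "soil": "clay"},
    {"sample": "S2", "lon": -75.9021, "lat": 45.3044, "soil": "sand"},
]

pairs = join_by_location(rain_gauges, soil_samples)
for gauge, sample in pairs:
    print(gauge["gauge"], sample["sample"], gauge["rain_mm"], sample["soil"])
```

Here the only pair returned is gauge G1 with sample S1, because they are the only two records that share a grid cell.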

Any variable that can be located spatially, and increasingly also temporally, can be referenced using a GIS. Locations or extents in Earth space–time may be recorded as dates/times of occurrence, and x, y, and z coordinates representing longitude, latitude, and elevation, respectively. These GIS coordinates may represent other quantified systems of temporo-spatial reference (for example, film frame number, stream gage station, highway mile-marker, surveyor benchmark, building address, street intersection, entrance gate, water depth sounding, POS or CAD drawing origin/units). Units applied to recorded temporal-spatial data can vary widely (even when using exactly the same data, see map projections), but all Earth-based spatial–temporal location and extent references should, ideally, be relatable to one another and ultimately to a "real" physical location or extent in space–time.

Related by accurate spatial information, an incredible variety of real-world and projected past or future data can be analyzed, interpreted and represented to facilitate education and decision making.[12] This key characteristic of GIS has begun to open new avenues of scientific inquiry into behaviors and patterns of previously considered unrelated real-world information.

GIS uncertainties

GIS accuracy depends upon source data and how it is encoded and referenced. Land surveyors have been able to provide a high level of positional accuracy using GPS-derived positions.[13] High-resolution digital terrain and aerial imagery,[14] powerful computers, and Web technology are changing the quality, utility, and expectations of GIS to serve society on a grand scale. Nevertheless, other source data also affect overall GIS accuracy. Paper maps, for example, are often unsuitable for achieving the desired accuracy, since aging affects their dimensional stability.

In developing a digital topographic database for a GIS, topographical maps are the main source of data. Aerial photography and satellite images are additional sources for collecting data and identifying attributes, which can be mapped in layers over a scaled facsimile of a location. The scale of a map and the type of geographical area representation are very important, since the information content depends mainly on the chosen scale and the resulting locatability of the map's representations. To digitize a map, the map has to be checked against its theoretical dimensions and then scanned into a raster format; the resulting raster data then has to be given theoretical dimensions by a rubber sheeting (warping) process.

Uncertainty is a significant problem in designing a GIS because spatial data tend to be used for purposes for which they were never intended. Some maps were made many decades ago, before the computer industry was established, which has led to historical reference maps without common norms. Map accuracy is a relative issue of minor importance in traditional cartography. All maps are made to communicate: they use a historically constrained technology of pen and paper to convey a view of the world to their users, and cartographers have felt little need to communicate accuracy information. When the same map is digitized and input into a GIS, however, the mode of use often changes, and the new uses extend well beyond the domain for which the original map was intended and designed.

A quantitative analysis of maps brings accuracy issues into focus. The electronic and other equipment used to make measurements for GIS is far more precise than the machines of conventional map analysis.[15] All geographical data are inherently inaccurate, and these inaccuracies will propagate through GIS operations in ways that are difficult to predict, even though the source data were designed with a particular communicative goal in mind. As an example of an accuracy standard, a 1:24,000 scale map has a stated accuracy of ± 40.00 feet.

This means that when we see a point or attribute on such a map, its "probable" location is within ± 40 feet of its rendered position, according to the map's area representations and scale.
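The ± 40 foot figure can be reproduced with a short calculation, assuming it derives from the US National Map Accuracy Standards (an assumption; the text does not name the standard). Under that standard the horizontal tolerance is 1/50 inch on the map for scales of 1:20,000 or smaller and 1/30 inch for larger scales. The function name below is hypothetical.

```python
def nmas_horizontal_tolerance_feet(scale_denominator):
    """Ground distance in feet equal to the NMAS map tolerance at a given scale.

    Assumes the US National Map Accuracy Standards thresholds:
    1/30 inch on maps larger than 1:20,000, otherwise 1/50 inch.
    """
    fraction_denominator = 30 if scale_denominator < 20000 else 50
    # map inches -> ground inches (times scale) -> ground feet (divide by 12)
    return scale_denominator / (fraction_denominator * 12)

print(nmas_horizontal_tolerance_feet(24000))  # 40.0
```

The same function gives roughly 33.3 feet for a 1:12,000 map, which shows that the tolerance on the ground depends on the scale at which the map was published.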

A GIS can also convert existing digital information, which may not yet be in map form, into forms it can recognize, employ for its data analysis processes, and use in forming mapping output. For example, digital satellite images generated through remote sensing can be analyzed to produce a map-like layer of digital information about vegetative covers on land locations. Another fairly recently developed resource for naming GIS location objects is the Getty Thesaurus of Geographic Names (GTGN), which is a structured vocabulary containing about 1,000,000 names and other information about places.[16]

Likewise, researched census or hydrological tabular data can be displayed in map-like form, serving as layers of thematic information for forming a GIS map.

Data representation

GIS data represents real objects (such as roads, land use, elevation, trees, waterways, etc.) as digital data. Real objects can be divided into two abstractions: discrete objects (e.g., a house) and continuous fields (such as rainfall amount or elevation). Traditionally, two broad methods are used to store data in a GIS for both kinds of abstraction: raster images and vector. Points, lines, and polygons are the building blocks of vector location references. A newer hybrid method of storing data is the point cloud, which combines three-dimensional points with RGB information at each point, returning a "3D color image". GIS thematic maps are thus becoming more and more realistically descriptive of what they set out to show or determine.

Raster

A raster data type is, in essence, any type of digital image represented by reducible and enlargeable grids. Anyone familiar with digital photography will recognize the pixel as the smallest individual grid unit of an image, usually not visible as a distinct shape until the image is greatly enlarged. The combination of pixels and their colors composes the details of an image, in contrast to the points, lines, and polygons that form the basis of the vector model. While a photograph or art image transferred into a computer aims to blend its grid-based details into a recognizable representation of reality, the raster data type in a GIS reflects a digitized abstraction of reality, with grid cells holding tones or objects, quantities, joined or open boundaries, and map relief schemas. Aerial photos are one commonly used form of raster data, with one primary purpose: to display a detailed image of a map area, or to serve as a base from which identifiable objects are digitized. Additional raster data sets used by a GIS contain information such as elevation (a digital elevation model), reflectance of a particular wavelength of light (for example, Landsat imagery), or other electromagnetic spectrum indicators.

The raster data type consists of rows and columns of cells, with each cell storing a single value. Raster data can be images (raster images) with each pixel (or cell) containing a color value. Additional values recorded for each cell may be a discrete value, such as land use, a continuous value, such as temperature, or a null value if no data is available. While a raster cell stores a single value, it can be extended by using raster bands to represent RGB (red, green, blue) colors, colormaps (a mapping between a thematic code and RGB value), or an extended attribute table with one row for each unique cell value. The resolution of the raster data set is its cell width in ground units.
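A minimal sketch of this structure, using plain Python lists rather than any particular GIS library, might look as follows. The grid values, origin, cell size and lookup tables are all hypothetical and exist only to show how a ground coordinate maps to a cell, and how a cell value maps to an attribute table and a colormap.

```python
NODATA = None  # null value for cells where no data is available

# Hypothetical 3-row by 4-column land-use raster; each cell stores one code.
land_use = [
    [1, 1, 2, 2],
    [1, 3, 3, 2],
    [NODATA, 3, 3, 2],
]
cell_size = 30.0                          # resolution: cell width in ground units
origin_x, origin_y = 500000.0, 4100000.0  # upper-left corner (assumed)

# Extended attribute table and colormap: one entry per unique cell value.
attribute_table = {1: "forest", 2: "water", 3: "urban"}
colormap = {1: (34, 139, 34), 2: (0, 0, 255), 3: (128, 128, 128)}

def cell_at(x, y):
    """Convert a ground coordinate to a (row, column) index in the raster."""
    col = int((x - origin_x) // cell_size)
    row = int((origin_y - y) // cell_size)  # rows count downward from the top
    if 0 <= row < len(land_use) and 0 <= col < len(land_use[0]):
        return row, col
    raise ValueError("coordinate lies outside the raster extent")

def describe(x, y):
    """Return (class name, RGB colour) for a coordinate, or None for no data."""
    row, col = cell_at(x, y)
    code = land_use[row][col]
    if code is NODATA:
        return None
    return attribute_table[code], colormap[code]

print(describe(500075.0, 4099955.0))  # ('urban', (128, 128, 128))
```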

Raster data is stored in various formats, from a standard file-based structure of TIF, JPEG, etc. to binary large object (BLOB) data stored directly in a relational database management system (RDBMS), similar to other vector-based feature classes. Database storage, when properly indexed, typically allows for quicker retrieval of the raster data but can require storage of millions of significantly sized records.

Vector

In a GIS, geographical features are often expressed as vectors, by considering those features as geometrical shapes. Different geographical features are expressed by different types of geometry:

- Zero-dimensional points are used for geographical features that can best be expressed by a single point reference—in other words, by simple location. Examples include wells, peaks, features of interest, and trailheads. Points convey the least amount of information of these file types. Points can also be used to represent areas when displayed at a small scale. For example, cities on a map of the world might be represented by points rather than polygons. No measurements are possible with point features.

- One-dimensional lines or polylines are used for linear features such as rivers, roads, railroads, trails, and topographic lines. Again, as with point features, linear features displayed at a small scale will be represented as linear features rather than as a polygon. Line features can measure distance.

- Two-dimensional polygons are used for geographical features that cover a particular area of the earth's surface. Such features may include lakes, park boundaries, buildings, city boundaries, or land uses. Polygons convey the greatest amount of information of the file types. Polygon features can measure perimeter and area.

Each of these geometries is linked to a row in a database that describes its attributes. For example, a database that describes lakes may contain a lake's depth, water quality, and pollution level. This information can be used to make a map to describe a particular attribute of the dataset. For example, lakes could be coloured depending on level of pollution. Different geometries can also be compared. For example, the GIS could be used to identify all wells (point geometry) that are within one kilometre of a lake (polygon geometry) that has a high level of pollution.
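The wells-and-lakes query can be sketched in plain Python, assuming planar coordinates in metres and simple polygons without holes. The lake and well data, the attribute names and the function names are hypothetical; a real GIS would normally run this as a spatial query against indexed geometries.

```python
import math

def point_segment_distance(p, a, b):
    """Shortest distance from point p to the line segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    if dx == 0 and dy == 0:
        return math.hypot(px - ax, py - ay)
    t = ((px - ax) * dx + (py - ay) * dy) / (dx * dx + dy * dy)
    t = max(0.0, min(1.0, t))  # clamp the projection onto the segment
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))

def point_in_polygon(p, ring):
    """Ray-casting test: count edge crossings to the right of the point."""
    x, y = p
    inside = False
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

def distance_to_polygon(p, ring):
    """Zero inside the polygon, otherwise distance to the nearest edge."""
    if point_in_polygon(p, ring):
        return 0.0
    edges = zip(ring, ring[1:] + ring[:1])
    return min(point_segment_distance(p, a, b) for a, b in edges)

def wells_near_polluted_lakes(wells, lakes, max_distance=1000.0):
    """Names of wells within max_distance of any lake with high pollution."""
    hits = set()
    for lake in lakes:
        if lake["pollution"] != "high":
            continue
        for name, xy in wells.items():
            if distance_to_polygon(xy, lake["ring"]) <= max_distance:
                hits.add(name)
    return sorted(hits)

# Hypothetical data: polygons with attribute rows, and point wells.
lakes = [
    {"name": "Lake A", "pollution": "high",
     "ring": [(0, 0), (2000, 0), (2000, 1000), (0, 1000)]},
    {"name": "Lake B", "pollution": "low",
     "ring": [(10000, 0), (11000, 0), (11000, 1000), (10000, 1000)]},
]
wells = {"W1": (2500, 500), "W2": (4000, 500), "W3": (10500, 1500)}

print(wells_near_polluted_lakes(wells, lakes))  # ['W1']
```

Well W3 lies within one kilometre of Lake B, but it is excluded because that lake's pollution attribute is not high, which shows the geometric test and the attribute filter working together.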

Vector features can be made to respect spatial integrity through the application of topology rules such as 'polygons must not overlap'. Vector data can also be used to represent continuously varying phenomena. Contour lines and triangulated irregular networks (TIN) are used to represent elevation or other continuously changing values. TINs record values at point locations, which are connected by lines to form an irregular mesh of triangles. The faces of the triangles represent the terrain surface.
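To illustrate how a triangle face stands in for the terrain surface, the following sketch estimates elevation inside a single TIN triangle by linear (barycentric) interpolation between its three vertices. This is one common way to read a value from a TIN, shown under the assumption of planar x, y coordinates; the function name and sample vertices are hypothetical.

```python
def interpolate_on_triangle(p, a, b, c):
    """Linearly interpolate z at point p=(x, y) on the triangle a, b, c.

    Each vertex is an (x, y, z) tuple. Returns None if p lies outside
    the triangle.
    """
    x, y = p
    (x1, y1, z1), (x2, y2, z2), (x3, y3, z3) = a, b, c
    det = (y2 - y3) * (x1 - x3) + (x3 - x2) * (y1 - y3)
    if det == 0:
        raise ValueError("degenerate triangle: vertices are collinear")
    # Barycentric weights: each is 1 at its own vertex and 0 at the others.
    w1 = ((y2 - y3) * (x - x3) + (x3 - x2) * (y - y3)) / det
    w2 = ((y3 - y1) * (x - x3) + (x1 - x3) * (y - y3)) / det
    w3 = 1.0 - w1 - w2
    if min(w1, w2, w3) < 0:
        return None
    return w1 * z1 + w2 * z2 + w3 * z3

# Hypothetical triangle whose face is the plane z = 100 + x + 2y.
a, b, c = (0, 0, 100), (10, 0, 110), (0, 10, 120)
print(interpolate_on_triangle((2, 2), a, b, c))
```

For the point (2, 2) the result is approximately 106, matching the plane through the three vertices.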

Advantages and disadvantages

There are some important advantages and disadvantages to using a raster or vector data model to represent reality:

- Raster datasets record a value for all points in the area covered which may require more storage space than representing data in a vector format that can store data only where needed.

- Raster data is computationally less expensive to render than vector graphics.

- There are transparency and aliasing problems when overlaying multiple stacked pieces of raster images.

- Vector data allows for visually smooth and easy implementation of overlay operations, especially in terms of graphics and shape-driven information like maps, routes and custom fonts, which are more difficult with raster data.

- Vector data can be displayed as vector graphics used on traditional maps, whereas raster data will appear as an image that may have a blocky appearance for object boundaries (depending on the resolution of the raster file).

- Vector data can be easier to register, scale, and re-project, which can simplify combining vector layers from different sources.

- Vector data is more compatible with relational database environments, where they can be part of a relational table as a normal column and processed using a multitude of operators.

- Vector file sizes are usually smaller than raster data, which can be tens, hundreds or more times larger than vector data (depending on resolution).

- Vector data is simpler to update and maintain, whereas a raster image will have to be completely reproduced (for example, when a new road is added).

- Vector data allows much more analysis capability, especially for "networks" such as roads, power, rail, telecommunications, etc. (Examples: Best route, largest port, airfields connected to two-lane highways). Raster data will not have all the characteristics of the features it displays.

Non-spatial data

Additional non-spatial data can also be stored along with the spatial data represented by the coordinates of a vector geometry or the position of a raster cell. In vector data, the additional data contains attributes of the feature. For example, a forest inventory polygon may also have an identifier value and information about tree species. In raster data the cell value can store attribute information, but it can also be used as an identifier that can relate to records in another table.

Software is currently being developed to support spatial and non-spatial decision-making, with the solutions to spatial problems being integrated with solutions to non-spatial problems. The end result with these flexible spatial decision-making support systems (FSDSSs)[17] is expected to be that non-experts will be able to use GIS, along with spatial criteria, and simply integrate their non-spatial criteria to view solutions to multi-criteria problems. This system is intended to assist decision-making.

Data capture

Data capture—entering information into the system—consumes much of the time of GIS practitioners. There are a variety of methods used to enter data into a GIS where it is stored in a digital format.

Existing data printed on paper or PET film maps can be digitized or scanned to produce digital data. A digitizer produces vector data as an operator traces points, lines, and polygon boundaries from a map. Scanning a map results in raster data that could be further processed to produce vector data.

Survey data can be directly entered into a GIS from digital data collection systems on survey instruments using a technique called coordinate geometry (COGO). Positions from a global navigation satellite system (GNSS) like the Global Positioning System (GPS), another survey tool, can also be collected and then imported into a GIS. A current trend in data collection gives users the ability to utilize field computers to edit live data using wireless connections or disconnected editing sessions. This has been enhanced by the availability of low-cost mapping-grade GPS units with decimeter accuracy in real time, which eliminates the need to post-process, import, and update the data in the office after fieldwork has been completed. It also includes the ability to incorporate positions collected using a laser rangefinder. New technologies also allow users to create maps and perform analysis directly in the field, making projects more efficient and mapping more accurate.

Remotely sensed data also plays an important role in data collection; it is gathered by sensors attached to a platform. Sensors include cameras, digital scanners and LIDAR, while platforms usually consist of aircraft and satellites. Recently, with the development of miniature UAVs, aerial data collection is becoming possible at much lower costs and on a more frequent basis. For example, the Aeryon Scout was used to map a 50 acre area with a ground sample distance of 1 inch (2.54 cm) in only 12 minutes.[18]

The majority of digital data currently comes from photo interpretation of aerial photographs. Soft-copy workstations are used to digitize features directly from stereo pairs of digital photographs. These systems allow data to be captured in two and three dimensions, with elevations measured directly from a stereo pair using principles of photogrammetry. Currently, analog aerial photos are scanned before being entered into a soft-copy system, but as high quality digital cameras become cheaper this step will be skipped.

Satellite remote sensing provides another important source of spatial data. Here satellites use different sensor packages to passively measure the reflectance from parts of the electromagnetic spectrum or radio waves that were sent out from an active sensor such as radar. Remote sensing collects raster data that can be further processed using different bands to identify objects and classes of interest, such as land cover.

When data is captured, the user should consider whether it should be captured with relative or absolute accuracy, since this influences not only how the information will be interpreted but also the cost of data capture.

In addition to collecting and entering spatial data, attribute data is also entered into a GIS. For vector data, this includes additional information about the objects represented in the system.

After data is entered into a GIS, it usually requires editing to remove errors, or further processing. Vector data must be made "topologically correct" before it can be used for some advanced analysis. For example, in a road network, lines must connect with nodes at an intersection. Errors such as undershoots and overshoots must also be removed. For scanned maps, blemishes on the source map may need to be removed from the resulting raster. For example, a fleck of dirt might connect two lines that should not be connected.

Raster-to-vector translation

Data restructuring can be performed by a GIS to convert data into different formats. For example, a GIS may be used to convert a satellite image map to a vector structure by generating lines around all cells with the same classification, while determining the cell spatial relationships, such as adjacency or inclusion.
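A minimal sketch of this idea, assuming a small classified raster held as a list of lists: for each class, collect the cell edges where the neighbouring cell has a different class or lies outside the grid. The resulting edge sets are the raw boundary lines, and two classes are adjacent when their edge sets intersect. The function name and the sample grid are hypothetical, and the sketch stops short of chaining edges into closed polygon rings.

```python
def class_boundaries(grid):
    """Return {class value: set of boundary edges}.

    Each edge is a pair of grid-corner points ((col, row), (col, row)).
    """
    rows, cols = len(grid), len(grid[0])
    edges = {}
    for r in range(rows):
        for c in range(cols):
            value = grid[r][c]
            # (neighbour row, neighbour col, edge start corner, edge end corner)
            sides = [
                (r - 1, c, (c, r), (c + 1, r)),          # top edge
                (r + 1, c, (c, r + 1), (c + 1, r + 1)),  # bottom edge
                (r, c - 1, (c, r), (c, r + 1)),          # left edge
                (r, c + 1, (c + 1, r), (c + 1, r + 1)),  # right edge
            ]
            for nr, nc, start, end in sides:
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or grid[nr][nc] != value:
                    edges.setdefault(value, set()).add((start, end))
    return edges

# Hypothetical classified image with two classes.
classified = [
    [1, 1, 2],
    [1, 2, 2],
]
boundaries = class_boundaries(classified)
shared = boundaries[1] & boundaries[2]  # non-empty means the classes are adjacent
print(len(boundaries[1]), len(boundaries[2]), len(shared))  # 8 8 3
```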

More advanced data processing can occur with image processing, a technique developed in the late 1960s by NASA and the private sector to provide contrast enhancement, false colour rendering and a variety of other techniques including use of two dimensional Fourier transforms.

Since digital data is collected and stored in various ways, two data sources may not be entirely compatible, so a GIS must be able to convert geographic data from one structure to another.

Projections, coordinate systems and registration

A property ownership map and a soils map might show data at different scales. Map information in a GIS must be manipulated so that it registers, or fits, with information gathered from other maps. Before the digital data can be analyzed, they may have to undergo other manipulations—projection and coordinate conversions, for example—that integrate them into a GIS.

The earth can be represented by various models, each of which may provide a different set of coordinates (e.g., latitude, longitude, elevation) for any given point on the Earth's surface. The simplest model is to assume the earth is a perfect sphere. As more measurements of the earth have accumulated, the models of the earth have become more sophisticated and more accurate. In fact, there are models that apply to different areas of the earth to provide increased accuracy (e.g., North American Datum, 1927 – NAD27 – works well in North America, but not in Europe). See datum (geodesy) for more information.

Projection is a fundamental component of map making. A projection is a mathematical means of transferring information from a model of the Earth, which represents a three-dimensional curved surface, to a two-dimensional medium—paper or a computer screen. Different projections are used for different types of maps because each projection particularly suits specific uses. For example, a projection that accurately represents the shapes of the continents will distort their relative sizes. See Map projection for more information.
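As a concrete instance of that trade-off, the sketch below implements the forward spherical Mercator projection, which preserves local shapes but inflates areas away from the equator. The choice of Mercator, the mean Earth radius and the function names are assumptions made for illustration; the text does not single out any particular projection.

```python
import math

EARTH_RADIUS_M = 6371000.0  # spherical Earth model, mean radius (assumed)

def mercator(lon_deg, lat_deg, radius=EARTH_RADIUS_M):
    """Project longitude/latitude in degrees to planar x, y in metres."""
    lam = math.radians(lon_deg)
    phi = math.radians(lat_deg)
    x = radius * lam
    y = radius * math.log(math.tan(math.pi / 4 + phi / 2))
    return x, y

def mercator_area_inflation(lat_deg):
    """Factor by which Mercator enlarges small areas at a given latitude."""
    # Linear scale is 1/cos(latitude) in both directions, so area scales
    # with its square.
    return 1.0 / math.cos(math.radians(lat_deg)) ** 2

print(mercator(10.0, 60.0))
print(mercator_area_inflation(60.0))
```

At 60 degrees latitude the inflation factor is about 4, so a region there is drawn roughly four times larger in area than a region of the same true size at the equator.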

Since much of the information in a GIS comes from existing maps, a GIS uses the processing power of the computer to transform digital information, gathered from sources with different projections and/or different coordinate systems, to a common projection and coordinate system. For images, this process is called rectification.

Spatial analysis with GIS

Given the vast range of spatial analysis techniques that have been developed over the past half century, any summary or review can only cover the subject to a limited depth. This is a rapidly changing field, and GIS packages are increasingly including analytical tools as standard built-in facilities or as optional toolsets, add-ins or 'analysts'. In many instances such facilities are provided by the original software suppliers (commercial vendors or collaborative non-commercial development teams), whilst in other cases facilities have been developed and are provided by third parties. Furthermore, many products offer software development kits (SDKs), programming languages and language support, scripting facilities and/or special interfaces for developing one's own analytical tools or variants. The website Geospatial Analysis and associated book/ebook attempt to provide a reasonably comprehensive guide to the subject.[19] The many paths now available for performing spatial analysis add a new dimension to business intelligence termed "spatial intelligence", which, when delivered via intranet, democratizes access to geographic information for operational staff not usually privy to this type of information.

Slope and aspect

Slope, aspect and surface curvature in terrain analysis are all derived from neighbourhood operations using elevation values of a cell's adjacent neighbours.[20] Authors such as Skidmore,[21] Jones[22] and Zhou and Liu[23] have compared techniques for calculating slope and aspect. Slope is a function of resolution, and the spatial resolution used to calculate slope and aspect should always be specified.[24]

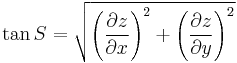

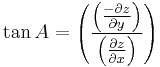

The elevation at a point will have perpendicular tangents (slope) passing through the point, in an east-west and north-south direction. These two tangents give two components, ∂z/∂x and ∂z/∂y, which can then be used to determine the overall direction of slope, and the aspect of the slope. The gradient is defined as a vector quantity with components equal to the partial derivatives of the surface in the x and y directions.[25]

For methods that determine the east-west and north-south components, the overall slope and aspect of a 3x3 grid are calculated with the following formulas, respectively:

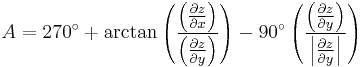
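The following sketch illustrates the general idea using a simple central-difference estimate of ∂z/∂x and ∂z/∂y on a 3x3 window. It is only one of the several techniques compared in the literature cited above and is not necessarily the formula shown in the figure. The function name, the window layout (row 0 is the northern row) and the convention that aspect is the compass bearing of the downslope direction are assumptions made for illustration.

```python
import math

def slope_aspect(window, cell_size):
    """Estimate slope and aspect (degrees) at the centre of a 3x3 elevation window.

    window[0] is assumed to be the northern row and column 0 the western column.
    Aspect is returned as a compass bearing (0 = north, 90 = east) of the
    downslope direction, or None on flat ground.
    """
    dz_dx = (window[1][2] - window[1][0]) / (2 * cell_size)  # east minus west
    dz_dy = (window[0][1] - window[2][1]) / (2 * cell_size)  # north minus south
    slope_deg = math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))
    if dz_dx == 0 and dz_dy == 0:
        return 0.0, None
    # The downslope direction is opposite to the gradient vector.
    aspect_deg = math.degrees(math.atan2(-dz_dx, -dz_dy)) % 360
    return slope_deg, aspect_deg
```

Because the result depends on `cell_size`, the same terrain sampled at a coarser resolution will generally yield gentler slopes, which is why the resolution should be reported alongside the values.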

Zhou and Liu[23] describe another algorithm for calculating aspect, as follows:

Data analysis

It is difficult to relate wetlands maps to rainfall amounts recorded at different points such as airports, television stations, and high schools. A GIS, however, can be used to depict two- and three-dimensional characteristics of the Earth's surface, subsurface, and atmosphere from information points. For example, a GIS can quickly generate a map with isopleth or contour lines that indicate differing amounts of rainfall.

Such a map can be thought of as a rainfall contour map. Many sophisticated methods can estimate the characteristics of surfaces from a limited number of point measurements. A two-dimensional contour map created from the surface modeling of rainfall point measurements may be overlaid and analyzed with any other map in a GIS covering the same area.

Additionally, from a series of three-dimensional points, or a digital elevation model, isopleth lines representing elevation contours can be generated, along with slope analysis, shaded relief, and other elevation products. Watersheds can be easily defined for any given reach by computing all of the areas contiguous and uphill from any given point of interest. Similarly, the expected thalweg, the line that surface water would follow in intermittent and permanent streams, can be computed from elevation data in the GIS.

Topological modeling

A GIS can recognize and analyze the spatial relationships that exist within digitally stored spatial data. These topological relationships allow complex spatial modelling and analysis to be performed. Topological relationships between geometric entities traditionally include adjacency (what adjoins what), containment (what encloses what), and proximity (how close something is to something else).
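As an illustration of one such relationship, containment, the sketch below tests whether a point lies inside a polygon using the ray-casting technique. The function name and the representation of a polygon as a list of (x, y) vertices are assumptions for this example; points exactly on the boundary are not handled specially.

```python
def point_in_polygon(point, polygon):
    """Return True if point (x, y) lies inside polygon (list of (x, y) vertices)."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does a horizontal ray cast to the right of the point cross this edge?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside  # each crossing toggles inside/outside
    return inside
```

A query such as "which parcels contain this well?" reduces to repeating a test of this kind for each candidate polygon.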

Networks

Geometric networks are linear networks of objects that can be used to represent interconnected features, and to perform special spatial analysis on them. A geometric network is composed of edges, which are connected at junction points, similar to graphs in mathematics and computer science. Just like graphs, networks can have weight and flow assigned to their edges, which can be used to represent various interconnected features more accurately. Geometric networks are often used to model road networks and public utility networks, such as electric, gas, and water networks. Network modeling is also commonly employed in transportation planning, hydrology modeling, and infrastructure modeling.
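A typical analysis on such a weighted network is finding the least-cost route between two junctions. The sketch below uses Dijkstra's algorithm on an undirected network; the function name and the edge format `(junction_a, junction_b, weight)` are assumptions for illustration, and the weights could stand for length, travel time or any other non-negative cost.

```python
import heapq

def shortest_path_cost(edges, source, target):
    """Return the least total weight between two junctions, or None if unreachable."""
    graph = {}
    for a, b, weight in edges:
        graph.setdefault(a, []).append((b, weight))
        graph.setdefault(b, []).append((a, weight))  # edges are treated as two-way
    best = {source: 0}
    queue = [(0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == target:
            return cost
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry
        for neighbour, weight in graph.get(node, []):
            new_cost = cost + weight
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                heapq.heappush(queue, (new_cost, neighbour))
    return None
```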

Hydrological modeling

GIS hydrological models can provide a spatial element that other hydrological models lack, with the analysis of variables such as slope, aspect and watershed or catchment area.[26] Terrain analysis is fundamental to hydrology, since water always flows down a slope.[26] As basic terrain analysis of a digital elevation model (DEM) involves calculation of slope and aspect, DEMs are very useful for hydrological analysis. Slope and aspect can then be used to determine direction of surface runoff, and hence flow accumulation for the formation of streams, rivers and lakes. Areas of divergent flow can also give a clear indication of the boundaries of a catchment. Once a flow direction and accumulation matrix has been created, queries can be performed that show contributing or dispersal areas at a certain point.[26] More detail can be added to the model, such as terrain roughness, vegetation types and soil types, which can influence infiltration and evapotranspiration rates, and hence surface flow. These extra layers of detail make for a more accurate model. See also GIS in Water Contamination and GIS in Environmental Contamination.
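One common way to derive a flow direction from a DEM is the eight-neighbour steepest-descent (D8) approach, sketched below. The text above does not name a specific method, so the choice of D8, the function name and the DEM representation as a list of rows are assumptions made for illustration.

```python
import math

def d8_flow_direction(dem, row, col):
    """Return the (d_row, d_col) offset of the steepest downslope neighbour.

    Returns None when no neighbour is lower (a pit or flat cell).
    """
    best_drop, best_offset = 0.0, None
    for d_row in (-1, 0, 1):
        for d_col in (-1, 0, 1):
            if d_row == 0 and d_col == 0:
                continue
            r, c = row + d_row, col + d_col
            if 0 <= r < len(dem) and 0 <= c < len(dem[0]):
                distance = math.hypot(d_row, d_col)  # diagonals are farther away
                drop = (dem[row][col] - dem[r][c]) / distance
                if drop > best_drop:
                    best_drop, best_offset = drop, (d_row, d_col)
    return best_offset
```

Applying this to every cell gives a flow direction matrix; counting how many cells drain through each cell then gives flow accumulation.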

Cartographic modeling

The term "cartographic modeling" was (probably) coined by Dana Tomlin in his PhD dissertation and later in his book which has the term in the title. Cartographic modeling refers to a process where several thematic layers of the same area are produced, processed, and analyzed. Tomlin used raster layers, but the overlay method (see below) can be used more generally. Operations on map layers can be combined into algorithms, and eventually into simulation or optimization models.

Map overlay

The combination of several spatial datasets (points, lines or polygons) creates a new output vector dataset, visually similar to stacking several maps of the same region. These overlays are similar to mathematical Venn diagram overlays. A union overlay combines the geographic features and attribute tables of both inputs into a single new output. An intersect overlay defines the area where both inputs overlap and retains a set of attribute fields for each. A symmetric difference overlay defines an output area that includes the total area of both inputs except for the overlapping area.
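The Venn diagram analogy can be made concrete with ordinary set operations. The sketch below is a deliberate simplification: it treats each input as a set of grid-cell identifiers rather than true polygon geometry, and it ignores attribute tables. The function name and the cell representation are assumptions for illustration.

```python
def overlay(a, b):
    """Combine two sets of cell identifiers using the three overlay types."""
    return {
        "union": a | b,                 # everything covered by either input
        "intersect": a & b,             # only the area where both overlap
        "symmetric_difference": a ^ b,  # both inputs minus the overlapping area
    }
```

For example, with `a = {(0, 0), (0, 1)}` and `b = {(0, 1), (1, 1)}`, the intersect result contains only the shared cell `(0, 1)`.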

Data extraction is a GIS process similar to vector overlay, though it can be used in either vector or raster data analysis. Rather than combining the properties and features of both datasets, data extraction involves using a "clip" or "mask" to extract the features of one data set that fall within the spatial extent of another dataset.

In raster data analysis, the overlay of datasets is accomplished through a process known as "local operation on multiple rasters" or "map algebra," through a function that combines the values of each raster's matrix. This function may weigh some inputs more than others through use of an "index model" that reflects the influence of various factors upon a geographic phenomenon.
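A local operation of this kind can be sketched as a cell-by-cell weighted sum. The function name, the rasters-as-nested-lists representation and the example weights are assumptions for illustration; all input rasters are assumed to share the same dimensions and alignment.

```python
def weighted_overlay(rasters, weights):
    """Combine aligned rasters cell by cell as a weighted sum (a simple index model)."""
    rows, cols = len(rasters[0]), len(rasters[0][0])
    return [
        [sum(w * raster[i][j] for raster, w in zip(rasters, weights))
         for j in range(cols)]
        for i in range(rows)
    ]

# Hypothetical suitability scores: slope counts three times as much as soil.
slope_score = [[1, 2], [3, 4]]
soil_score = [[4, 3], [2, 1]]
suitability = weighted_overlay([slope_score, soil_score], [0.75, 0.25])
```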

Automated cartography

Digital cartography and GIS both encode spatial relationships in structured formal representations. GIS is used in digital cartography modeling as a (semi)automated process of making maps, so called Automated Cartography. In practice, it can be a subset of a GIS, within which it is equivalent to the stage of visualization, since in most cases not all of the GIS functionality is used. Cartographic products can be either in a digital or in a hardcopy format. Powerful analysis techniques with different data representation can produce high-quality maps within a short time period. The main problem in Automated Cartography is to use a single set of data to produce multiple products at a variety of scales, a technique known as cartographic generalization.

Geostatistics

Geostatistics is a point-pattern analysis that produces field predictions from data points. It is a way of looking at the statistical properties of those spatial data. It differs from general applications of statistics because it employs graph theory and matrix algebra to reduce the number of parameters in the data. Only the second-order properties of the GIS data are analyzed.

When phenomena are measured, the observation methods dictate the accuracy of any subsequent analysis. Due to the nature of the data (e.g. traffic patterns in an urban environment; weather patterns over the Pacific Ocean), a constant or dynamic degree of precision is always lost in the measurement. This loss of precision is determined from the scale and distribution of the data collection.

To determine the statistical relevance of the analysis, an average is determined so that points (gradients) outside of any immediate measurement can be included to determine their predicted behavior. This is due to the limitations of the applied statistic and data collection methods, and interpolation is required to predict the behavior of particles, points, and locations that are not directly measurable.

Interpolation is the process by which a surface is created, usually a raster dataset, through the input of data collected at a number of sample points. There are several forms of interpolation, each of which treats the data differently, depending on the properties of the data set. In comparing interpolation methods, the first consideration should be whether or not the source data will change (exact or approximate). Next is whether the method is subjective, a human interpretation, or objective. Then there is the nature of transitions between points: are they abrupt or gradual? Finally, there is whether a method is global (it uses the entire data set to form the model), or local, where an algorithm is repeated for a small section of terrain.

Interpolation is a justified measurement because of the principle of spatial autocorrelation, which recognizes that data collected at any position will be very similar to, or influenced by, data at locations in its immediate vicinity.

Digital elevation models (DEM), triangulated irregular networks (TIN), edge finding algorithms, Thiessen polygons, Fourier analysis, (weighted) moving averages, inverse distance weighting, kriging, spline, and trend surface analysis are all mathematical methods to produce interpolative data.
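Of the methods listed, inverse distance weighting is among the simplest to write down: each sample contributes to the estimate with a weight that falls off with distance. The sketch below is a minimal version; the function name, the `(x, y, value)` sample format and the default power of 2 are assumptions for illustration.

```python
import math

def idw(samples, x, y, power=2):
    """Estimate a value at (x, y) from (sx, sy, value) samples by inverse distance weighting."""
    numerator = 0.0
    denominator = 0.0
    for sx, sy, value in samples:
        distance = math.hypot(x - sx, y - sy)
        if distance == 0:
            return value  # exact interpolator: sample points keep their measured value
        weight = 1 / distance ** power
        numerator += weight * value
        denominator += weight
    return numerator / denominator
```

In the terms used above, this version is exact (measured values are reproduced at sample locations), objective, gradual, and global, since every sample contributes to every estimate; a local variant would restrict the loop to nearby samples.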

Address geocoding

Geocoding is interpolating spatial locations (X,Y coordinates) from street addresses or any other spatially referenced data such as ZIP Codes, parcel lots and address locations. A reference theme is required to geocode individual addresses, such as a road centerline file with address ranges. The individual address locations have historically been interpolated, or estimated, by examining address ranges along a road segment. These are usually provided in the form of a table or database. The GIS will then place a dot approximately where that address belongs along the segment of centerline. For example, an address point of 500 will be at the midpoint of a line segment that starts with address 1 and ends with address 1000. Geocoding can also be applied against actual parcel data, typically from municipal tax maps. In this case, the result of the geocoding will be an actually positioned space as opposed to an interpolated point. This approach is being increasingly used to provide more precise location information.
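The address-range interpolation described above can be sketched as a linear interpolation along a straight centerline segment. The function name, the coordinate tuples and the assumption of a single straight segment are illustrative; real reference themes also distinguish odd and even sides of the street and follow curved geometry.

```python
def geocode_on_segment(house_number, low, high, start, end):
    """Interpolate a point for house_number along a segment with address range low..high."""
    if high == low:
        raise ValueError("address range must span more than one number")
    fraction = (house_number - low) / (high - low)
    x = start[0] + fraction * (end[0] - start[0])
    y = start[1] + fraction * (end[1] - start[1])
    return x, y

# Address 500 on a segment ranging from 1 to 1000 lands very close to the midpoint.
point = geocode_on_segment(500, 1, 1000, (0.0, 0.0), (999.0, 0.0))
```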

There are several potentially dangerous caveats that are often overlooked when using interpolation. See the full entry for Geocoding for more information.

Various algorithms are used to help with address matching when the spellings of addresses differ. Address information that a particular entity or organization has data on, such as the post office, may not entirely match the reference theme. There could be variations in street name spelling, community name, etc. Consequently, the user generally has the ability to make matching criteria more stringent, or to relax those parameters so that more addresses will be mapped. Care must be taken to review the results so as not to map addresses incorrectly due to overzealous matching parameters.
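The trade-off between stringent and relaxed matching can be illustrated with a similarity threshold. The sketch below uses the `difflib` module from the Python standard library as a stand-in for the matching algorithms a GIS might use; the function name, the street names and the default cutoff are assumptions for illustration.

```python
import difflib

def match_street(name, reference_names, cutoff=0.8):
    """Return the closest reference street name, or None if nothing is similar enough."""
    lookup = {ref.lower(): ref for ref in reference_names}
    hits = difflib.get_close_matches(name.lower(), list(lookup), n=1, cutoff=cutoff)
    return lookup[hits[0]] if hits else None
```

With a cutoff of 0.8 the misspelling "Main Stret" matches "Main Street", while raising the cutoff to 0.99 rejects it. Lowering the cutoff maps more addresses but raises the risk of matching the wrong street.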

Reverse geocoding

Reverse geocoding is the process of returning an estimated street address number as it relates to a given coordinate. For example, a user can click on a road centerline theme (thus providing a coordinate) and have information returned that reflects the estimated house number. This house number is interpolated from a range assigned to that road segment. If the user clicks at the midpoint of a segment that starts with address 1 and ends with 100, the returned value will be somewhere near 50. Note that reverse geocoding does not return actual addresses, only estimates of what should be there based on the predetermined range.
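The estimate is the inverse of the interpolation used in geocoding. In the sketch below, the function name and the idea of expressing the clicked position as a fraction of the segment length (0 at the start, 1 at the end) are assumptions for illustration.

```python
def estimate_house_number(fraction, low, high):
    """Estimate a house number from the fractional position along a segment."""
    if not 0 <= fraction <= 1:
        raise ValueError("fraction must lie between 0 and 1")
    return round(low + fraction * (high - low))
```

A click at the midpoint (`fraction = 0.5`) of a segment ranging from 1 to 100 yields a value near 50, whether or not a building with that number actually exists.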

Data output and cartography

Cartography is the design and production of maps, or visual representations of spatial data. The vast majority of modern cartography is done with the help of computers, usually using a GIS, but production-quality cartography is also achieved by importing layers into a design program for refinement. Most GIS software gives the user substantial control over the appearance of the data.

Cartographic work serves two major functions:

First, it produces graphics on the screen or on paper that convey the results of analysis to the people who make decisions about resources. Wall maps and other graphics can be generated, allowing the viewer to visualize and thereby understand the results of analyses or simulations of potential events. Web Map Servers facilitate distribution of generated maps through web browsers using various implementations of web-based application programming interfaces (AJAX, Java, Flash, etc.).

Second, other database information can be generated for further analysis or use. An example would be a list of all addresses within one mile (1.6 km) of a toxic spill.
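The toxic spill example amounts to a distance query. The sketch below computes great-circle distances with the haversine formula on a spherical Earth and filters a list of addresses. The function names, the `(name, lat, lon)` record format, the mean Earth radius of 6371 km and the sample coordinates are all assumptions for illustration.

```python
import math

def haversine_km(lat1, lon1, lat2, lon2, radius_km=6371.0):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(a))

def addresses_within(addresses, spill, limit_km=1.6):
    """Return names of addresses no farther than limit_km from the spill (lat, lon)."""
    return [name for name, lat, lon in addresses
            if haversine_km(lat, lon, spill[0], spill[1]) <= limit_km]

# Hypothetical data: one address about 1.1 km away, another about 2.2 km away.
nearby = addresses_within([("A", 0.0, 0.01), ("B", 0.0, 0.02)], (0.0, 0.0))
```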

Graphic display techniques

Traditional maps are abstractions of the real world, a sampling of important elements portrayed on a sheet of paper with symbols to represent physical objects. People who use maps must interpret these symbols. Topographic maps show the shape of land surface with contour lines or with shaded relief.

Today, graphic display techniques such as shading based on altitude in a GIS can make relationships among map elements visible, heightening one's ability to extract and analyze information. For example, two types of data were combined in a GIS to produce a perspective view of a portion of San Mateo County, California.

- The digital elevation model, consisting of surface elevations recorded on a 30-meter horizontal grid, shows high elevations as white and low elevations as black.

- The accompanying Landsat Thematic Mapper image shows a false-color infrared image looking down at the same area in 30-meter pixels, or picture elements, for the same coordinate points, pixel by pixel, as the elevation information.

A GIS was used to register and combine the two images to render the three-dimensional perspective view looking down the San Andreas Fault, using the Thematic Mapper image pixels, but shaded using the elevation of the landforms. The GIS display depends on the viewing point of the observer and time of day of the display, to properly render the shadows created by the sun's rays at that latitude, longitude, and time of day.

An archeochrome is a new way of displaying spatial data. It is a thematic on a 3D map that is applied to a specific building or a part of a building. It is suited to the visual display of heat loss data.

Spatial ETL

Spatial ETL tools provide the data processing functionality of traditional Extract, Transform, Load (ETL) software, but with a primary focus on the ability to manage spatial data. They provide GIS users with the ability to translate data between different standards and proprietary formats, whilst geometrically transforming the data en route.

GIS developments

Many disciplines can benefit from GIS technology. An active GIS market has resulted in lower costs and continual improvements in the hardware and software components of GIS. These developments will, in turn, result in a much wider use of the technology throughout science, government, business, and industry, with applications including real estate, public health, crime mapping, national defense, sustainable development, natural resources, landscape architecture, archaeology, regional and community planning, transportation and logistics. GIS is also diverging into location-based services (LBS). LBS allows GPS enabled mobile devices[27] to display their location in relation to fixed assets (nearest restaurant, gas station, fire hydrant), mobile assets (friends, children, police car) or to relay their position back to a central server for display or other processing. These services continue to develop with the increased integration of GPS functionality with increasingly powerful mobile electronics (cell phones, PDAs, laptops).

OGC standards

The Open Geospatial Consortium (OGC) is an international industry consortium of 384 companies, government agencies, universities and individuals[28] participating in a consensus process to develop publicly available geoprocessing specifications. Open interfaces and protocols defined by OpenGIS Specifications support interoperable solutions that "geo-enable" the Web, wireless and location-based services, and mainstream IT, and empower technology developers to make complex spatial information and services accessible and useful with all kinds of applications. Open Geospatial Consortium (OGC) protocols include Web Map Service (WMS) and Web Feature Service (WFS).
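As an illustration of how such an open interface is used, the sketch below assembles a WMS 1.3.0 GetMap request URL from its standard query parameters. The function name, the server address and the layer name are hypothetical; the bounding box must be given in the axis order of the chosen coordinate reference system.

```python
from urllib.parse import urlencode

def wms_getmap_url(base_url, layer, bbox, width, height, crs="EPSG:3857"):
    """Build a WMS 1.3.0 GetMap URL for a single layer rendered as PNG."""
    params = {
        "SERVICE": "WMS",
        "VERSION": "1.3.0",
        "REQUEST": "GetMap",
        "LAYERS": layer,
        "STYLES": "",  # empty value requests the server's default style
        "CRS": crs,
        "BBOX": ",".join(str(v) for v in bbox),
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": "image/png",
    }
    return base_url + "?" + urlencode(params)

# Hypothetical server and layer, for illustration only.
url = wms_getmap_url("https://example.org/wms", "roads", (0, 0, 10, 10), 256, 256)
```

Because the request is defined by the specification rather than by a vendor, the same URL pattern should work against any server that implements the standard.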

GIS products are broken down by the OGC into two categories, based on how completely and accurately the software follows the OGC specifications.

Compliant Products are software products that comply with OGC's OpenGIS Specifications. When a product has been tested and certified as compliant through the OGC Testing Program, the product is automatically registered as "compliant" on the OGC website.

Implementing Products are software products that implement OpenGIS Specifications but have not yet passed a compliance test. Compliance tests are not available for all specifications. Developers can register their products as implementing draft or approved specifications, though OGC reserves the right to review and verify each entry.

Web mapping

In recent years there has been an explosion of mapping applications on the web such as Google Maps and Bing Maps. These websites give the public access to huge amounts of geographic data.

Some of them, like Google Maps and OpenLayers, expose APIs that enable users to create custom applications. These toolkits commonly offer street maps, aerial/satellite imagery, geocoding, searches, and routing functionality.

Other applications for publishing geographic information on the web include GeoBase (Telogis GIS software), Smallworld's SIAS or GSS, MapInfo's MapXtreme or PlanAccess[29] or Stratus Connect, Cadcorp's GeognoSIS, Intergraph's GeoMedia WebMap (TM), ESRI's ArcIMS, ArcGIS Server, Autodesk's MapGuide, Bentley's Geo Web Publisher, SeaTrails' AtlasAlive, ObjectFX's Web Mapping Tools, ERDAS APOLLO Suite, Google Earth, Google Fusion Tables, and the open source MapServer, MiraMon Web Map Server or GeoServer.

In recent years web mapping services have begun to adopt features more common in GIS. Services such as Google Maps and Bing Maps allow users to access and annotate maps and share the maps with others.

Service-oriented architecture

Recently, GIS have begun to migrate from stand-alone systems to more integrated, enterprise approaches that share resources, data, and applications using a service-oriented architecture (SOA). This allows application developers to create flexible and extensible GIS that can quickly respond to changing and future organizational needs.

Global change, climate history program and prediction of its impact

Maps have traditionally been used to explore the Earth and to exploit its resources. GIS technology, as an expansion of cartographic science, has enhanced the efficiency and analytic power of traditional mapping. Now, as the scientific community recognizes the environmental consequences of anthropogenic activities influencing climate change, GIS technology is becoming an essential tool to understand the impacts of this change over time. GIS enables the combination of various sources of data with existing maps and up-to-date information from earth observation satellites along with the outputs of climate change models. This can help in understanding the effects of climate change on complex natural systems. One of the classic examples of this is the study of Arctic Ice Melting.

The outputs from a GIS in the form of maps combined with satellite imagery allow researchers to view their subjects in ways that have never been seen before. The images are also invaluable for conveying the effects of climate change to non-scientists.

Adding the dimension of time

The condition of the Earth's surface, atmosphere, and subsurface can be examined by feeding satellite data into a GIS. GIS technology gives researchers the ability to examine the variations in Earth processes over days, months, and years.

As an example, the changes in vegetation vigor through a growing season can be animated to determine when drought was most extensive in a particular region. The resulting graphic, known as a normalized vegetation index, represents a rough measure of plant health. Working with two variables over time would then allow researchers to detect regional differences in the lag between a decline in rainfall and its effect on vegetation.

GIS technology and the availability of digital data on regional and global scales enable such analyses. The satellite sensor output used to generate a vegetation graphic is produced for example by the Advanced Very High Resolution Radiometer (AVHRR). This sensor system detects the amounts of energy reflected from the Earth's surface across various bands of the spectrum for surface areas of about 1 square kilometer. The satellite sensor produces images of a particular location on the Earth twice a day. AVHRR and more recently the Moderate-Resolution Imaging Spectroradiometer (MODIS) are only two of many sensor systems used for Earth surface analysis. More sensors will follow, generating ever greater amounts of data.
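A vegetation index of this kind is commonly computed as the normalized difference vegetation index (NDVI), which contrasts reflectance in the near-infrared and red bands. The sketch below shows the per-pixel calculation; the function name and the sample reflectance values are assumptions for illustration.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel: (NIR - red) / (NIR + red)."""
    total = nir + red
    if total == 0:
        return None  # no reflected energy recorded; index undefined
    return (nir - red) / total

# Hypothetical reflectances: healthy vegetation reflects strongly in the near-infrared.
vigorous = ndvi(0.5, 0.1)
sparse = ndvi(0.3, 0.2)
```

Values range from -1 to 1, with higher values indicating denser or healthier vegetation. Computing the index for each image in a time series gives the frames for an animation such as the one described above.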

GIS and related technology will help greatly in the management and analysis of these large volumes of data, allowing for better understanding of terrestrial processes and better management of human activities to maintain world economic vitality and environmental quality.

In addition to the integration of time in environmental studies, GIS is also being explored for its ability to track and model the progress of humans throughout their daily routines. A concrete example of progress in this area is the recent release of time-specific population data by the US Census. In this data set, the populations of cities are shown for daytime and evening hours, highlighting the pattern of concentration and dispersion generated by North American commuting patterns. The manipulation and generation of data required to produce this data set would not have been possible without GIS.

Using models to project the data held by a GIS forward in time has enabled planners to test policy decisions. These systems are known as Spatial Decision Support Systems.

Semantics

Tools and technologies emerging from the W3C's Semantic Web Activity are proving useful for data integration problems in information systems. Correspondingly, such technologies have been proposed as a means to facilitate interoperability and data reuse among GIS applications[30][31] and also to enable new analysis mechanisms.[32]

Ontologies are a key component of this semantic approach as they allow a formal, machine-readable specification of the concepts and relationships in a given domain. This in turn allows a GIS to focus on the intended meaning of data rather than its syntax or structure. For example, reasoning that a land cover type classified as deciduous needleleaf trees in one dataset is a specialization or subset of land cover type forest in another more roughly classified dataset can help a GIS automatically merge the two datasets under the more general land cover classification. Tentative ontologies have been developed in areas related to GIS applications, for example the hydrology ontology developed by the Ordnance Survey in the United Kingdom and the SWEET ontologies developed by NASA's Jet Propulsion Laboratory. Also, simpler ontologies and semantic metadata standards are being proposed by the W3C Geo Incubator Group to represent geospatial data on the web.
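The land cover example can be sketched as a walk up a class hierarchy. Real ontologies are expressed in dedicated languages and processed by reasoners; the dictionary-based hierarchy, the intermediate class names and the function name below are simplifying assumptions for illustration.

```python
# Hypothetical hierarchy: each land cover class maps to its more general parent.
PARENT_OF = {
    "deciduous needleleaf trees": "needleleaf forest",
    "needleleaf forest": "forest",
    "forest": "land cover",
}

def is_specialization(concept, ancestor, parent_of=PARENT_OF):
    """Return True if concept equals ancestor or is a (transitive) subclass of it."""
    seen = set()
    while concept is not None and concept not in seen:
        if concept == ancestor:
            return True
        seen.add(concept)  # guards against accidental cycles in the hierarchy
        concept = parent_of.get(concept)
    return False
```

A merge routine could use such a check to relabel finely classified features with the coarser class shared by both datasets.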

Recent research results in this area can be seen in the International Conference on Geospatial Semantics and the Terra Cognita – Directions to the Geospatial Semantic Web workshop at the International Semantic Web Conference.

Society

With the popularization of GIS in decision making, scholars have begun to scrutinize the social implications of GIS. It has been argued that the production, distribution, utilization, and representation of geographic information are closely related to the social context. Other related topics include discussion on copyright, privacy, and censorship. A more optimistic social approach to GIS adoption is to use it as a tool for public participation.

References

Footnotes

- "Geographic Information Systems as an Integrating Technology: Context, Concepts, and Definitions". ESRI. http://www.colorado.edu/geography/gcraft/notes/intro/intro.html. Retrieved 9 June 2011.

- Clarke, K. C. (1986). "Advances in geographic information systems". Computers, Environment and Urban Systems 10: 175–184.

- Goodchild, Michael F (2010). "Twenty years of progress: GIScience in 2010". Journal of Spatial Information Science. doi:10.5311/JOSIS.2010.1.2.

- "John Snow's Cholera Map". York University. http://www.york.ac.uk/depts/maths/histstat/snow_map.htm. Retrieved 2007-06-09.

- Fitzgerald, Joseph H. "Map Printing Methods". Archived from the original on 2007-06-04. http://web.archive.org/web/20070604194024/http://www.broward.org/library/bienes/lii14009.htm. Retrieved 2007-06-09.

- "GIS Hall of Fame – Roger Tomlinson". URISA. http://www.urisa.org/node/395. Retrieved 2007-06-09.

- Lovison-Golob, Lucia. "Howard T. Fisher". Harvard University. http://www.gis.dce.harvard.edu/fisher/HTFisher.htm. Retrieved 2007-06-09.

- "Open Source GIS History – OSGeo Wiki Editors". http://wiki.osgeo.org/wiki/Open_Source_GIS_History. Retrieved 2009-03-21.

- Fu, P., and J. Sun (2010). Web GIS: Principles and Applications. ESRI Press. Redlands, CA. ISBN 158948245X.

- Foresman, Tim (1997). The History of GIS (Geographic Information Systems): Perspectives from the Pioneers. (Prentice Hall Series in Geographic Information Science) Prentice Hall PTR; 1st edition (November 10, 1997), 416 p.

- Coppock, J. T., and D. W. Rhind (1991). "The history of GIS". Geographical Information Systems: principles and applications. Ed. David J. Maguire, Michael F. Goodchild and David W. Rhind. Essex: Longman Scientific & Technical. 1: 21–43. http://scholar.google.com/scholar?cluster=13820827634229141183&hl=en&as_sdt=10000000000000. Retrieved 2010-09-17.

- Cowen (1988). "GIS versus CAD versus DBMS: What are the differences?" Photogrammetric Engineering & Remote Sensing 54 (11): 1551–1555. http://funk.on.br/esantos/doutorado/GEO/igce/DBMS.pdf. Retrieved 2010-09-17.

- http://www.fgdc.gov/standards/projects/FGDC-standards-projects/accuracy/part3/chapter3

- https://njgin.state.nj.us/NJ_NJGINExplorer/IW.jsp

- http://nationalmap.gov/gio/standards

- http://gis.ednet.ns.ca/gis_uses_in_US.htm

- Gao, Shan; Paynter, John; Sundaram, David (2004). "Flexible Support for Spatial Decision-Making". Proc. of the 37th Hawaii International Conference on System Sciences 5–8 pp. 10

- http://www.aeryon.com/news/pressreleases/248-softwareversion5.html

- Geospatial Analysis – a comprehensive guide. 2nd edition © 2006–2008 de Smith, Goodchild, Longley

- Chang, K. T. (2008). Introduction to Geographical Information Systems. New York: McGraw Hill. p. 184.

- Skidmore, A. K. (1989). "A comparison of techniques for calculating gradient and aspect from a gridded digital elevation model". International Journal of Geographical Information Science 3 (4): 323–334. doi:10.1080/02693798908941519.

- Jones, K.H. (1998). "A comparison of algorithms used to compute hill slope as a property of the DEM". Computers and Geosciences 24 (4): 315–323. doi:10.1016/S0098-3004(98)00032-6.

- Zhou, Q. and Liu, X. (2003). "Analysis of errors of derived slope and aspect related to DEM data properties". Computers and Geosciences 30: 269–378.

- Longley, P. A., Goodchild, M. F., Maguire, D. J., and Rhind, D. W. (2005). Geographic Information Systems and Science. West Sussex, England: John Wiley and Sons. 328.

- Hunter G. J. and Goodchild M. F. (1997). "Modeling the uncertainty of slope and aspect estimates derived from spatial databases". Geographical Analysis 29 (1): 35–49. doi:10.1111/j.1538-4632.1997.tb00944.x. http://www.geog.ucsb.edu/~good/papers/261.pdf.

- Heywood, I., Cornelius, S., & Carver, S. (2006). An Introduction to Geographical Information Systems (3rd ed.). Essex, England: Prentice Hall.

- Mobile GIS & LBS. http://www.webmapsolutions.com/mobile-arcgis-paper-gps-data-collection

- http://www.opengeospatial.org/ogc/members

- http://www.cdrgroup.co.uk/index.htm?sales_mi_prod_planaccess.htm

- Fonseca, Frederico; Sheth, Amit (2002). "The Geospatial Semantic Web" (PDF). UCGIS White Paper. http://www.personal.psu.edu/faculty/f/u/fuf1/Fonseca-Sheth.pdf

- Fonseca, Frederico; Egenhofer, Max (1999). "Ontology-Driven Geographic Information Systems". Proc. ACM International Symposium on Geographic Information Systems. pp. 14–19

- Perry, Matthew; Hakimpour, Farshad; Sheth, Amit (2006). "Analyzing Theme, Space and Time: an Ontology-based Approach" (PDF). Proc. ACM International Symposium on Geographic Information Systems. pp. 147–154. http://knoesis.wright.edu/library/download/ACM-GIS_06_Perry.pdf

Some retrieved from Federal Geographic Data Committee

Notations

- IGRS-GIS Institute of Geoinformatics & Remote Sensing

Further reading

- Berry, J.K. (1993) Beyond Mapping: Concepts, Algorithms and Issues in GIS. Fort Collins, CO: GIS World Books.

- Bolstad, P. (2005) GIS Fundamentals: A first text on Geographic Information Systems, Second Edition. White Bear Lake, MN: Eider Press, 543 pp.

- Burrough, P.A. and McDonnell, R.A. (1998) Principles of geographical information systems. Oxford University Press, Oxford, 327 pp.

- Chang, K. (2007) Introduction to Geographic Information System, 4th Edition. McGraw Hill.

- Coulman, Ross (2001–present) Numerous GIS White Papers

- de Smith M J, Goodchild M F, Longley P A (2007) Geospatial analysis: A comprehensive guide to principles, techniques and software tools, 2nd edition, Troubador, UK. Available free online.

- Elangovan, K. (2006) GIS: Fundamentals, Applications and Implementations. New India Publishing Agency, New Delhi, 208 pp.

- Fu, P., and J. Sun. 2010. Web GIS: Principles and Applications. ESRI Press. Redlands, CA. ISBN 158948245X.

- Harvey, Francis (2008) A Primer of GIS, Fundamental geographic and cartographic concepts. The Guilford Press, 31 pp.

- Heywood, I., Cornelius, S., and Carver, S. (2006) An Introduction to Geographical Information Systems. Prentice Hall. 3rd edition.

- Longley, P.A., Goodchild, M.F., Maguire, D.J. and Rhind, D.W. (2005) Geographic Information Systems and Science. Chichester: Wiley. 2nd edition.

- Maguire, D.J., Goodchild M.F., Rhind D.W. (1997) "Geographic Information Systems: principles, and applications" Longman Scientific and Technical, Harlow.

- Ott, T. and Swiaczny, F. (2001) Time-integrative GIS. Management and analysis of spatio-temporal data, Berlin / Heidelberg / New York: Springer.

- Sajeevan G (2008) Latitude and longitude – A misunderstanding, Current Science: March 2008. Vol 94. No 5. 568 pp. Available online.

- Sajeevan G (2006) Customise and empower, www.geospatialtoday.com: April 2006. 40 pp.

- Thurston, J., Poiker, T.K. and J. Patrick Moore. (2003) Integrated Geospatial Technologies: A Guide to GPS, GIS, and Data Logging. Hoboken, New Jersey: Wiley.

- Tomlinson, R.F., (2005) Thinking About GIS: Geographic Information System Planning for Managers. ESRI Press. 328 pp.

- Wise, S. (2002) GIS Basics. London: Taylor & Francis.

- Worboys, Michael, and Matt Duckham. (2004) GIS: a computing perspective. Boca Raton: CRC Press.

- Wheatley, David and Gillings, Mark (2002) Spatial Technology and Archaeology. The Archaeological Application of GIS. London, New York, Taylor & Francis.

External links

- Association of Geographic Information Laboratories for Europe (AGILE) – promoting academic teaching and research on GIS at the European level

- Cartography and Geographic Information Society (CaGIS)

- Directions Magazine – All Things Location

- Federal Geographic Data Committee—United States federal government standards agency.

- Geographic Information System (GIS) Educational website—Educational site with PDF lessons and videos to accompany free GIS software.

- GIS Development – The Geospatial Communication Network

- GIS Lounge Information site about GIS.

- GISWiki.NEWS.Reader – Searchable feed aggregator for a large collection of GIS news, mostly in English.

- GITA – Geospatial Information & Technology Association.

- International Cartographic Association (ICA), the world body for mapping and GIScience professionals

- National States Geographic Information Council (NSGIC)

- Open Forum on Participatory Geographic Information Systems and Technologies – a global network of PGIS/PPGIS English-speaking practitioners and researchers with Spanish, Portuguese and French-speaking chapters.

- Open Geospatial Consortium, Inc.

- Open Source Geospatial Foundation

- USGS GIS Poster—Frequently cited "What is GIS" poster.